Enterprise teams that use multi-model aggregators such as AI Fiesta take on regulatory exposure under the EU AI Act and US state laws. A single unlogged request routed to a frontier model in a sensitive workflow can lead to audits, suspension orders, and fines that exceed subscription costs by orders of magnitude.

Governed deployments, however, convert these platforms into audit-ready productivity engines.

THE REGULATORY LANDSCAPE

The EU AI Act defines the Provider and Deployer roles in Art. 3; an aggregator that integrates models and distributes them commercially under its own name can qualify as a Provider. General-purpose models with systemic risk face documentation, testing, and reporting duties (Art. 51–55). High-risk use cases (Annex III) require conformity assessment (Art. 43) and human oversight (Art. 14).

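The Colorado AI Act requires impact assessments for systems that make consequential decisions. California rules address output watermarking and bias audits. New York City's Local Law 144 targets automated employment decision tools. ISO/IEC 42001 is a voluntary standard rather than a law; it specifies a formal AI management system, including risk management.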

PRACTITIONER’S GUIDE

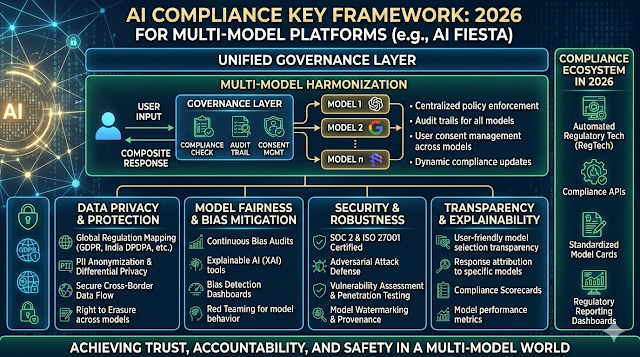

Deploy compliantly with these five steps:

- Map every use case against Annex III and state high-risk lists.

- Confirm that you qualify as a Deployer rather than a Provider; request the vendor’s EU Declaration of Conformity (where one has been issued) and systemic risk summary.

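- Enforce mandatory output labelling (model name, version, watermark) and 6-year prompt-response logging. Six years is a conservative policy choice: the Act itself sets a floor of six months for deployers of high-risk systems (Art. 26(6)), and sector rules may require longer.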

- Test quarterly for bias and robustness across all routed models.

- Monitor upstream model changes and re-assess risk within 14 days.
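The labelling, logging, and re-assessment steps above could be sketched roughly as follows. This is a minimal illustration, not a compliance-certified implementation: `AuditRecord`, `label_output`, `make_record`, and `reassessment_due` are hypothetical names, the 6-year retention is approximated as 6 × 365 days, and the label is a visible text tag rather than a cryptographic watermark.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=6 * 365)   # assumed reading of the 6-year logging policy
REASSESS_WINDOW = timedelta(days=14)  # re-assessment window from the last step


@dataclass(frozen=True)
class AuditRecord:
    use_case: str
    model_name: str
    model_version: str
    prompt: str
    response: str
    logged_at: str
    delete_after: str
    digest: str  # SHA-256 over the other fields, for tamper evidence


def label_output(response: str, model_name: str, model_version: str) -> str:
    """Append a visible provenance label; this is not a cryptographic watermark."""
    return f"{response}\n[AI-generated | model={model_name} | version={model_version}]"


def make_record(use_case, model_name, model_version, prompt, response, now=None):
    """Build one log entry for a single routed prompt-response pair."""
    now = now or datetime.now(timezone.utc)
    fields = {
        "use_case": use_case,
        "model_name": model_name,
        "model_version": model_version,
        "prompt": prompt,
        "response": response,
        "logged_at": now.isoformat(),
        "delete_after": (now + RETENTION).isoformat(),
    }
    payload = json.dumps(fields, sort_keys=True).encode("utf-8")
    return AuditRecord(**fields, digest=hashlib.sha256(payload).hexdigest())


def reassessment_due(change_detected_at: datetime) -> datetime:
    """Deadline for re-assessing risk after an upstream model change."""
    return change_detected_at + REASSESS_WINDOW


if __name__ == "__main__":
    rec = make_record("claims-triage", "example-model", "2026-01", "prompt text", "response text")
    print(json.dumps(asdict(rec), indent=2))
    print(label_output(rec.response, rec.model_name, rec.model_version))
```

Recording the model name and version on every entry is what makes a later complaint traceable to a specific routed model. In practice, prompts that contain personal data would likely need redaction or access controls before being stored for this long.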

THE "LIABILITY" ANGLE

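The top penalty tier, up to €35M or 7% of global annual turnover, applies to prohibited practices (Art. 99(3)). Breaches of provider or deployer obligations, including integration failures, carry fines of up to €15M or 3% of turnover (Art. 99(4)); in each tier the higher of the two figures applies. Deployers carry liability for misuse, poor monitoring, or missing oversight. Vendor disclaimers rarely absolve deployer obligations under Art. 26.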
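To see how a "fixed amount or percentage of turnover" ceiling plays out, the short sketch below computes the maximum fine for a given tier. It assumes the Art. 99 rule that the higher figure applies to most undertakings and the lower one to SMEs and start-ups; `fine_ceiling` and its parameters are hypothetical names, and the turnover figure is invented for illustration.

```python
def fine_ceiling(fixed_cap_eur: float, turnover_pct: float,
                 annual_turnover_eur: float, sme: bool = False) -> float:
    """Return the maximum fine for one penalty tier.

    Assumption: the higher of the two figures applies, except for SMEs
    and start-ups, where the lower one does.
    """
    pct_amount = annual_turnover_eur * turnover_pct / 100
    if sme:
        return min(fixed_cap_eur, pct_amount)
    return max(fixed_cap_eur, pct_amount)


if __name__ == "__main__":
    turnover = 2_000_000_000  # hypothetical EUR 2bn global annual turnover
    print(fine_ceiling(15_000_000, 3, turnover))  # 3% tier
    print(fine_ceiling(35_000_000, 7, turnover))  # 7% tier
```

For a large enterprise the percentage usually dominates: at €2bn turnover the 3% tier already allows up to €60M, four times the fixed €15M figure.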

REAL-WORLD CASE SCENARIO

In this illustrative scenario, an EU insurance company routes claims processing through AI Fiesta without model-specific logs. A denied claim sparks a discrimination complaint. Regulators find no transparency records and no deployer risk assessment. The result: a €1.9M fine and immediate suspension of the tool.

COMPLIANCE FAQ

Am I a Provider if I use AI Fiesta commercially?

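Usually not. You remain a Deployer with monitoring, logging, and transparency duties, unless you rebrand the system under your own name, substantially modify it, or change its intended purpose so that it becomes high-risk (Art. 25).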

How do I manage bias differences between routed models?

Run parallel adversarial tests; restrict or gate high-variance models in sensitive domains.
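One way to turn that answer into a routing rule is to run the same test cases through every routed model, measure how far outcomes diverge between groups, and gate any model that exceeds a tolerance. The sketch below uses the gap in approval rates between groups as a simple stand-in metric; the function names, the 0.10 threshold, and the sample data are all assumptions for illustration, and a real audit would use metrics chosen for the domain.

```python
from collections import defaultdict


def approval_rate_gap(outcomes):
    """Largest difference in approval rate between any two groups.

    outcomes: iterable of (group, approved) pairs from one model's test run.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in outcomes:
        totals[group] += 1
        approved[group] += int(ok)
    if not totals:
        raise ValueError("no test outcomes supplied")
    rates = [approved[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


def gate_models(results_by_model, max_gap=0.10):
    """Map each model to 'allow' or 'gate' based on its approval-rate gap."""
    return {
        model: "gate" if approval_rate_gap(outcomes) > max_gap else "allow"
        for model, outcomes in results_by_model.items()
    }


if __name__ == "__main__":
    # Hypothetical results from running identical test cases through two models.
    results = {
        "model-a": [("x", True)] * 8 + [("x", False)] * 2
                 + [("y", True)] * 8 + [("y", False)] * 2,
        "model-b": [("x", True)] * 9 + [("x", False)] * 1
                 + [("y", True)] * 5 + [("y", False)] * 5,
    }
    print(gate_models(results))
```

A gated model need not be removed from the platform; it can be excluded from sensitive workflows or routed through human review while staying available for low-risk tasks.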

What must I obtain from the aggregator vendor?

Declaration of Conformity (if issued), technical summary, systemic risk report, and change-notification SLA.

Does the platform’s prompt optimiser satisfy compliance?

No. It improves UX but does not fulfil deployer obligations for assessments or labelling.

CONCLUSION

Skipping governance on multi-model aggregators invites enforcement that wipes out years of efficiency gains. Fines, remediation, and lost trust cost far more than any monthly fee. Classify use cases, enforce logging, and test rigorously now, or become the 2026 compliance cautionary tale.