Artificial intelligence has become one of the most valuable technologies in the modern world. As companies race to build advanced AI models, data has become the most important resource behind these systems. Because of this, concerns have grown in the United States about how sensitive AI data could be accessed or exploited by foreign actors.

One issue that often appears in policy discussions and cybersecurity reports is the possibility that Chinese entities could steal or gain unauthorised access to AI data held by U.S.-based companies. Governments, researchers, and security experts have raised concerns about intellectual property theft, cyber espionage, and data misuse connected to the global AI race.

This article explains how AI data from U.S. companies could potentially be accessed or stolen, the methods experts warn about, and why it has become a major topic in technology and national security discussions.

Why AI Data Is So Valuable

Artificial intelligence systems depend on massive amounts of data. This data is used to train models so they can recognise patterns, generate text or images, and make predictions.

For example, AI companies rely on datasets that include:

- Large collections of text, images, and videos
- User behaviour and interaction data
- Proprietary research data
- Business analytics information
- Software code and algorithms

These datasets can take years to build and may cost companies billions of dollars. Because of this, AI training data and model designs are considered valuable intellectual property.

If competitors gain access to this information, they could potentially replicate technologies without the same level of investment.

Growing U.S. Concerns About AI Security

Over the past decade, U.S. government agencies and cybersecurity experts have warned about foreign efforts to obtain sensitive technology and research data.

Artificial intelligence is now part of a broader strategic competition between major global powers.

In response, the United States has introduced measures such as:

-

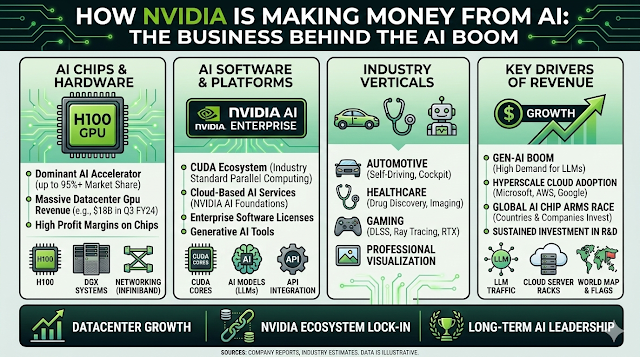

Export controls on advanced AI chips

-

Restrictions on certain technology partnerships

-

Increased scrutiny of foreign investments in tech companies

-

New cybersecurity standards for sensitive data

These steps aim to protect intellectual property and prevent sensitive technologies from being misused.

Common Methods Used to Access AI Data

Experts say that if AI data is accessed without authorisation, it often happens through a combination of cyber techniques, insider access, and supply chain vulnerabilities.

Below are some of the methods frequently discussed in cybersecurity reports.

1. Cyber Espionage and Hacking

One of the most widely reported methods involves cyber attacks targeting technology companies and research institutions.

Hackers may attempt to gain access to:

- AI training datasets
- proprietary algorithms
- research documents
- cloud storage systems
- internal development tools

These attacks often involve techniques such as:

- phishing emails
- malware
- network intrusion
- credential theft

Once inside a system, attackers may attempt to copy or download sensitive information related to AI development.

Cybersecurity agencies in several countries have reported incidents in which advanced hacking groups targeted companies working on emerging technologies.

2. Insider Access and Employee Recruitment

Another risk involves individuals who already have access to company systems.

Employees, contractors, or researchers working at technology firms may have access to valuable AI development resources.

In some cases, insiders might:

- transfer proprietary code or data
- download confidential research
- share internal information with outside organisations

Because of this risk, many companies implement strict security controls, such as:

- limited access permissions
- activity monitoring
- internal security audits

These measures help reduce the chances of sensitive information leaving the organisation.
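
As a rough illustration of how limited access permissions and activity monitoring can work together, the sketch below checks each request against a role's permissions and records the outcome in an audit log. It is a minimal example under stated assumptions, not a description of any particular company's system: the role names, the permission strings, and the helpers `request_access` and `denied_attempts` are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping. A real deployment would load this
# from an identity and access management system rather than hard-coding it.
ROLE_PERMISSIONS = {
    "ml_engineer": {"training_data:read", "model_weights:read"},
    "contractor": {"docs:read"},
}


@dataclass
class AuditLog:
    """In-memory audit trail; stands in for a real logging backend."""
    entries: list = field(default_factory=list)

    def record(self, user, role, permission, allowed):
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "permission": permission,
            "allowed": allowed,
        })


def is_allowed(role, permission):
    # Unknown roles get an empty permission set, so access is denied by default.
    return permission in ROLE_PERMISSIONS.get(role, set())


def request_access(log, user, role, permission):
    """Check a request against the role's permissions and log the outcome."""
    allowed = is_allowed(role, permission)
    log.record(user, role, permission, allowed)
    return allowed


def denied_attempts(log):
    """Return logged requests that were refused, for later security review."""
    return [entry for entry in log.entries if not entry["allowed"]]
```

Denying unknown roles by default and logging every decision, including refusals, gives an internal security audit a record to review.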

3. Academic and Research Collaboration Risks

Artificial intelligence research often involves collaboration between universities, research labs, and technology companies around the world.

While international cooperation drives innovation, it can also create potential security challenges.

Concerns sometimes arise when:

- Research partnerships involve sensitive technologies
- Data sharing agreements are unclear
- Intellectual property protections are weak

Governments and institutions now pay closer attention to technology transfer risks in international research partnerships.

Many universities have introduced stricter guidelines for protecting research data.

4. Supply Chain and Hardware Vulnerabilities

AI systems rely on complex global supply chains involving hardware, software, and cloud infrastructure.

Security experts warn that vulnerabilities in supply chains could expose sensitive data.

For example, risks could emerge from:

- compromised software libraries
- insecure third-party tools
- vulnerabilities in hardware components
- cloud infrastructure misconfigurations

Companies now conduct supply chain security audits to ensure that external vendors and partners follow strong security practices.
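
One common safeguard against compromised software libraries is to compare a downloaded artifact with a digest that was recorded when the dependency was first reviewed. The sketch below shows the idea using Python's standard `hashlib` and `hmac` modules. The function names `sha256_hex` and `verify_artifact` are hypothetical, and the example assumes the pinned digest comes from a trusted source.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the artifact as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_digest: str) -> bool:
    """Return True only if the artifact matches the digest pinned at review time.

    hmac.compare_digest performs a constant-time comparison of the two strings.
    """
    return hmac.compare_digest(sha256_hex(data), pinned_digest.lower())
```

A build step could call `verify_artifact` on each downloaded package and stop if the result is `False`. This catches tampering after the digest was pinned, but not a library that was already malicious when it was reviewed.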

5. Data Scraping and Public Data Harvesting

Another method sometimes discussed is large-scale scraping of publicly available information.

AI companies often collect large datasets from the internet to train models.

However, other organisations may also scrape online sources such as:

- websites
- academic publications
- public databases
- open-source code repositories

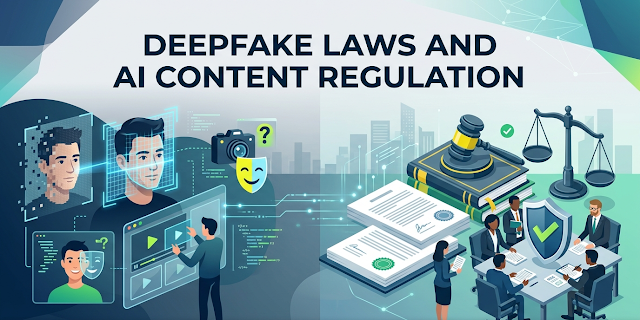

While collecting publicly available data is legal in many cases, disputes can arise when companies believe their proprietary content has been used without permission.

This issue has become the subject of major debate in the AI industry.

Real-World Examples and Policy Responses

Concerns about technology transfer and intellectual property theft are not limited to AI. They have been part of broader technology disputes between the United States and China for years.

Several high-profile cases have involved allegations of:

- corporate espionage
- stolen semiconductor designs
- unauthorised use of proprietary code

In response, the U.S. government has introduced policies aimed at protecting critical technologies.

Examples include:

- restrictions on advanced semiconductor exports
- expanded cybersecurity cooperation between the government and private companies
- stronger enforcement of intellectual property laws

These policies aim to prevent sensitive technologies from being accessed by unauthorised entities.

What U.S. Companies Are Doing to Protect AI Data

To protect valuable AI assets, many technology companies have strengthened their cybersecurity strategies.

Common protection measures include:

Advanced Cybersecurity Systems

Companies deploy sophisticated monitoring tools that detect suspicious activity across networks.

Zero-Trust Security Models

Access to sensitive systems is limited and continuously verified to reduce insider threats.
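
To make "continuously verified" more concrete, the sketch below issues a short-lived signed token and re-checks it on every request instead of trusting a session indefinitely. It is a simplified illustration, not a complete zero-trust implementation: the secret key, the five-minute lifetime, and the functions `issue_token` and `verify_token` are assumptions made for the example.

```python
import hashlib
import hmac
import time
from typing import Optional

SECRET_KEY = b"demo-only-secret"  # hypothetical; real keys belong in a secrets manager
TOKEN_LIFETIME_SECONDS = 300      # assumed five-minute lifetime


def _sign(payload: str) -> str:
    return hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(user: str, now: Optional[float] = None) -> str:
    """Create a token of the form 'user|issued_at|signature'."""
    issued = int(time.time() if now is None else now)
    payload = f"{user}|{issued}"
    return f"{payload}|{_sign(payload)}"


def verify_token(token: str, now: Optional[float] = None) -> bool:
    """Re-check signature and age; intended to run on every request."""
    try:
        user, issued_str, signature = token.rsplit("|", 2)
        issued = int(issued_str)
    except ValueError:
        return False  # malformed token
    expected = _sign(f"{user}|{issued_str}")
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False  # payload or signature was altered
    current = time.time() if now is None else now
    return 0 <= current - issued <= TOKEN_LIFETIME_SECONDS
```

Because the token expires quickly and is verified on each call, a stolen or stale credential is useful for only a short window. Production systems typically also check device posture, location, and the specific resource being requested.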

Encryption and Data Protection

Sensitive datasets are encrypted to prevent unauthorised access.

AI Security Audits

Organisations regularly audit AI models, data pipelines, and infrastructure to identify vulnerabilities.

These steps help reduce the risk of data leaks or unauthorised access.

The Global Competition for AI Leadership

Artificial intelligence has become a major strategic priority for governments around the world.

Governments including those of the United States, China, and the European Union are investing billions of dollars in AI research and development.

This competition is driving:

-

rapid innovation

-

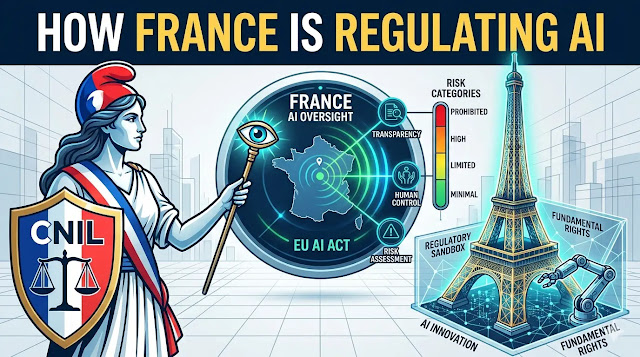

new technology regulations

-

increased cybersecurity protections

At the same time, experts emphasise that international cooperation remains important for solving global challenges such as healthcare, climate change, and scientific research.

Balancing competition and collaboration will likely shape the future of the AI industry.

Conclusion

Artificial intelligence has become one of the most valuable technologies of the 21st century, and the data behind it is among its most important assets. Because of this, concerns about AI data security and intellectual property protection have grown significantly.

Cybersecurity experts warn that unauthorised access to AI data could occur through hacking, insider threats, research collaboration risks, supply chain vulnerabilities, or large-scale data scraping.

In response, U.S. companies and government agencies are strengthening cybersecurity measures and introducing new policies to protect critical technologies.

As AI continues to reshape industries and economies, safeguarding data and intellectual property will remain a crucial part of the global technology landscape.