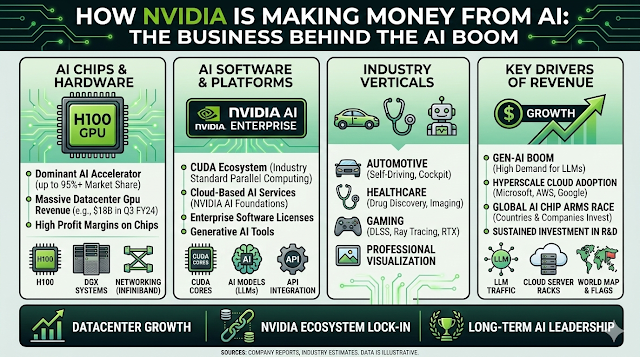

Artificial Intelligence is transforming industries around the world, and one company sits at the centre of this revolution: Nvidia. Over the past few years, Nvidia has become one of the most valuable technology companies globally, largely because of its critical role in powering AI systems.

But how exactly is Nvidia making money from AI? The answer goes beyond simply selling computer chips. Nvidia has built an entire ecosystem of hardware, software, and services that enables companies to develop and deploy artificial intelligence at scale.

In this article, we break down how Nvidia makes money from AI, why its technology is so important, and what this means for the future of the AI industry.

The AI Boom and Nvidia’s Role

Artificial intelligence requires enormous computing power. Training large AI models, like those used in chatbots, recommendation systems, and image generation, depends on specialised processors that can perform huge numbers of calculations in parallel.

This is where Nvidia’s GPUs (Graphics Processing Units) come in.

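Originally designed for video gaming and graphics rendering, GPUs turned out to be well suited to AI workloads. Machine learning relies heavily on matrix arithmetic that can be split into many independent operations, and a GPU's ability to process many tasks at once makes it far more efficient than a traditional CPU for this kind of work.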

Today, companies building AI systems rely heavily on Nvidia hardware. Major technology firms, including Microsoft, Google, Amazon, Meta, and OpenAI, use Nvidia GPUs to train and run AI models.

As demand for AI continues to surge, Nvidia has positioned itself as a critical supplier to the entire industry.

1. Selling AI GPUs (The Biggest Revenue Driver)

The largest portion of Nvidia’s AI revenue comes from selling high-performance GPUs designed for data centres.

These chips are specifically built to handle complex AI training and inference workloads.

Some of Nvidia’s most popular AI chips include:

- Nvidia A100
- Nvidia H100
- Nvidia H200
- Blackwell AI GPUs (new generation)

These processors power massive AI data centres used by cloud providers and research organisations.

Why These Chips Are So Expensive

AI GPUs are significantly more expensive than consumer graphics cards. A single advanced AI GPU can cost $25,000 to $40,000 or more, depending on configuration.

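Large AI data centres may require thousands or even tens of thousands of GPUs. At these prices, a cluster of 10,000 GPUs costs roughly $250 million to $400 million in chips alone, so the largest buyers spend billions of dollars on Nvidia hardware.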

For example:

- Cloud providers build massive GPU clusters to support AI services.
- AI startups buy GPUs to train machine learning models.
- Research institutions use GPUs for scientific computing.

This demand has made Nvidia’s data centre division the fastest-growing part of its business.
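To make the cost arithmetic in this section concrete, here is a minimal Python sketch. It uses only the $25,000 to $40,000 per-GPU price range quoted above; the function name `gpu_cluster_cost` and the cluster sizes in the loop are illustrative assumptions, not figures from Nvidia or any customer.

```python
def gpu_cluster_cost(num_gpus, price_low=25_000, price_high=40_000):
    """Return the (low, high) GPU hardware cost in US dollars for a cluster.

    The default prices are the per-GPU range quoted in the article.
    Networking, servers, power, and cooling are deliberately ignored.
    """
    if num_gpus < 0:
        raise ValueError("num_gpus must be non-negative")
    return num_gpus * price_low, num_gpus * price_high


# Hypothetical cluster sizes, chosen only to show how the total scales.
for n in (1_000, 10_000, 100_000):
    low, high = gpu_cluster_cost(n)
    print(f"{n:>7,} GPUs: ${low / 1e6:,.0f}M to ${high / 1e6:,.0f}M")
```

Under these assumptions, a few thousand GPUs cost tens to hundreds of millions of dollars, and spending reaches the billions once a buyer needs tens of thousands of GPUs.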

2. AI Data Centre Infrastructure

Beyond individual chips, Nvidia also sells complete AI computing systems.

Instead of buying separate components, many companies purchase full AI servers or supercomputing systems designed by Nvidia.

These include:

- Nvidia DGX systems
- HGX AI servers
- AI supercomputing clusters

These systems combine GPUs, networking technology, and software into powerful AI training platforms.

Large companies use these systems to build "AI factories": massive data centres dedicated to training and running artificial intelligence models.

Because these systems can cost millions of dollars, they represent another major revenue stream for Nvidia.

3. AI Software Platforms

Hardware alone is not enough to build AI applications. Developers also need software tools to create and optimise machine learning models.

Nvidia earns revenue by providing AI development platforms and software frameworks.

One of the most important is CUDA, Nvidia’s programming platform that allows developers to use GPUs for general computing tasks.

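CUDA has become a standard in the AI industry because it allows machine learning models to run efficiently on Nvidia hardware. The CUDA toolkit itself is free to download, so its commercial value is largely indirect: software written for CUDA targets Nvidia GPUs, which keeps developers and their employers buying Nvidia hardware.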

Other Nvidia AI software platforms include:

- Nvidia AI Enterprise
- Nvidia TensorRT
- Nvidia NeMo
- Nvidia Triton Inference Server

These tools help companies:

- Train AI models faster
- Deploy models in production
- Optimise performance
- Scale AI applications

Many of these solutions are offered through enterprise subscriptions, which create recurring revenue for Nvidia.

4. Partnerships With Cloud Providers

Another major way Nvidia makes money from AI is through partnerships with cloud computing platforms.

Companies like Amazon Web Services (AWS), Microsoft Azure, Google Cloud, and Oracle Cloud offer AI computing services powered by Nvidia GPUs.

Businesses that want to build AI models can rent GPU computing power from these cloud providers instead of buying hardware themselves.

While the cloud providers run the platforms, they still need to purchase large quantities of Nvidia GPUs to support their infrastructure.

As AI adoption grows, cloud providers continue buying more Nvidia chips, driving significant revenue.
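The rent-or-buy choice described in this section comes down to a simple break-even calculation, sketched below in Python. The purchase prices are the $25,000 to $40,000 range quoted earlier in this article. The hourly rental rate is a made-up placeholder, not a real price from any cloud provider, and the function name `break_even_hours` is likewise only illustrative.

```python
def break_even_hours(purchase_price, hourly_rate):
    """Hours of rental after which renting has cost as much as buying.

    Ignores power, cooling, staffing, and resale value, all of which
    would shift the result in practice.
    """
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return purchase_price / hourly_rate


# ASSUMPTION: placeholder rate in dollars per GPU-hour, for illustration only.
HYPOTHETICAL_HOURLY_RATE = 4.00

for price in (25_000, 40_000):
    hours = break_even_hours(price, HYPOTHETICAL_HOURLY_RATE)
    print(f"${price:,} GPU: break-even after {hours:,.0f} rented hours "
          f"(about {hours / 24 / 365:.1f} years of continuous use)")
```

The point is the shape of the trade-off rather than the numbers: a business that needs GPUs only occasionally stays well below the break-even point and is better off renting, while heavy continuous users have a stronger case for buying.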

5. AI Solutions for Specific Industries

Nvidia is also expanding into industry-specific AI solutions.

Instead of just selling hardware, the company now provides specialised AI platforms for sectors such as healthcare, automotive, robotics, and manufacturing.

Some examples include:

Healthcare AI

Nvidia develops tools that help researchers analyse medical images, accelerate drug discovery, and process genomic data.

Hospitals and biotech companies use Nvidia technology to build AI-powered diagnostics and research systems.

Autonomous Vehicles

Nvidia has invested heavily in self-driving car technology.

Its DRIVE platform provides AI computing systems for autonomous vehicles, enabling features like:

- Object detection
- Navigation
- Driver assistance
- Real-time decision making

Automakers and technology companies use Nvidia’s platform to build next-generation vehicles.

Robotics and Industrial Automation

Manufacturers are using AI-powered robots to improve productivity.

Nvidia’s Isaac robotics platform helps developers create intelligent robots capable of performing complex tasks in warehouses, factories, and logistics centres.

These industry-focused solutions open new revenue opportunities beyond traditional chip sales.

6. AI Research and Innovation

Nvidia also invests heavily in AI research and development, which helps the company stay ahead in a competitive market.

Its innovations often lead to new products that drive additional revenue.

For example, Nvidia has developed technologies such as:

- Transformer Engine for AI models
- Advanced AI networking
- Next-generation GPU architectures

These innovations make Nvidia hardware faster and more efficient for AI workloads.

Because training large AI models requires enormous computing power, companies are willing to invest heavily in the latest hardware improvements.

Why Nvidia Dominates the AI Hardware Market

Several factors have helped Nvidia become the leader in AI computing.

Early Investment in AI

Nvidia began developing GPU computing tools long before the AI boom. This gave the company a major advantage when machine learning started gaining popularity.

Strong Developer Ecosystem

Millions of developers use Nvidia’s software tools. This creates a powerful ecosystem that keeps companies invested in Nvidia technology.

High Performance Hardware

Nvidia GPUs are widely considered the most powerful processors for AI training and inference tasks.

Continuous Innovation

The company releases new chip architectures regularly, which helps it stay ahead of competitors.

Challenges and Competition

Despite its success, Nvidia faces growing competition.

Companies like AMD, Intel, and several AI chip startups are developing alternatives to Nvidia GPUs.

In addition, major technology companies such as Google, Amazon, and Microsoft are designing their own AI chips to reduce reliance on external suppliers.

However, Nvidia’s strong ecosystem and advanced technology give it a significant advantage in the market.

The Future of Nvidia and AI

The demand for artificial intelligence continues to grow rapidly. Businesses across industries are investing heavily in AI infrastructure, creating enormous opportunities for companies that provide the underlying technology.

Nvidia is well positioned to benefit from this trend because its products power many of the world’s most advanced AI systems.

In the coming years, Nvidia is expected to play a key role in developments such as:

- Generative AI platforms
- Autonomous vehicles
- Smart robotics
- AI-powered healthcare systems
- Large-scale cloud AI infrastructure

As artificial intelligence becomes more integrated into everyday technology, the need for powerful computing hardware will only increase.

Conclusion

Nvidia’s success in the AI era is not the result of a single product but of a comprehensive ecosystem built around artificial intelligence.

The company makes money through multiple channels, including:

- High-performance AI GPUs
- Data centre infrastructure
- AI software platforms
- Cloud partnerships
- Industry-specific AI solutions

By combining powerful hardware with developer tools and enterprise services, Nvidia has positioned itself as one of the most important companies in the global AI industry.

As AI adoption continues to expand, Nvidia’s technology will likely remain a cornerstone of the systems that power the next generation of digital innovation.